Frontier Labs Can't Automate Your Deliverables

Concerns over data security are driving financial institutions toward enterprise subscriptions with model providers such as OpenAI and Anthropic. These firms are keenly aware that, without zero-data-retention guarantees, employee use of consumer-facing AI systems poses a significant risk of exposing sensitive information.

Although enterprise subscriptions may mitigate many of the data privacy concerns associated with AI adoption, general-purpose models still lack the nuanced domain-specific expertise required for high-stakes financial workflows. For instance, a financial analyst using ChatGPT to generate a retention analysis will likely receive materially different outputs across repeated attempts, an unacceptable degree of variability in an industry where cell-level precision is standard.

Altitude addresses this limitation by providing dependable agentic infrastructure for the production of financial deliverables. The platform's implementation is unique to each client, who provides the firm-specific logic, formatting, and styles that are necessary for reproducing consistent deliverables that meet their expectations every time.

To compare our platform's capabilities with those of leading model providers, we evaluated ChatGPT, Claude, Gemini, and Altitude on a series of deliverable-generation tasks.

Setup

Each platform was provided with the same series of prompts and files.

Prompts

Each platform was first evaluated on the Excel analysis task and, upon completion, was then given the PowerPoint presentation task. The prompts shown below have been truncated for readability.

Excel Analysis Prompt

You are given two Excel workbooks:

- *data_room.xlsx*: contains the new source data. The recurring customer data is on the MRR tab.
- *model_template.xlsx*: contains a prior, firm-standard "Retention Analysis" tab that must be used as the exact template.

Goal

Create a new worksheet in data_room.xlsx named Retention Analysis that reproduces the Retention Analysis from model_template.xlsx, but populated with the new data from data_room.xlsx -> MRR.

PowerPoint Presentation Prompt

You are given:

- *data_room.xlsx*: contains the generated Retention Analysis worksheet.
- *template_deck.pptx*: the firm's base deck to derive styles and formats from.

Create a new PowerPoint deck that contains exactly two slides:

1. a title slide
2. a "Retention Analysis" slide with a screenshot of the Excel Retention Analysis plus commentary callouts

All slide layouts, typography, colors, spacing, and graphic elements must be drawn from template_deck.pptx.
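For readers unfamiliar with the underlying analysis, the sketch below shows the kind of arithmetic a retention analysis typically performs on MRR data. It is an illustration only: the firm's template defines its own formulas, which are not reproduced in this post, and the function names and sample figures here are hypothetical.

```python
from typing import Dict

# Hypothetical representation: customer name -> MRR for one period.
MRR = Dict[str, float]


def _starting_customers(start: MRR) -> set:
    """Customers with positive MRR at the start of the window."""
    customers = {c for c, v in start.items() if v > 0}
    if not customers:
        raise ValueError("no paying customers in the starting period")
    return customers


def customer_retention(start: MRR, end: MRR) -> float:
    """Share of starting customers that still pay in the ending period."""
    starting = _starting_customers(start)
    retained = [c for c in starting if end.get(c, 0.0) > 0]
    return len(retained) / len(starting)


def net_dollar_retention(start: MRR, end: MRR) -> float:
    """Ending MRR of the starting cohort divided by its starting MRR.

    Customers acquired after the starting period are excluded.
    """
    starting = _starting_customers(start)
    start_total = sum(start[c] for c in starting)
    end_total = sum(end.get(c, 0.0) for c in starting)
    return end_total / start_total


# Made-up sample data, not taken from the experiment.
jan = {"A": 100.0, "B": 200.0, "C": 50.0}
feb = {"A": 120.0, "B": 0.0, "C": 50.0, "D": 80.0}

print(round(customer_retention(jan, feb), 4))
print(round(net_dollar_retention(jan, feb), 4))
```

Both metrics are computed against the starting cohort only, which is why customer D, acquired in the second period, does not affect either figure.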

Performance

Prior to conducting the experiment, I performed a series of exploratory tests to better understand how each model behaved in practice. These preliminary trials generated important insights about the quirks of each system, which in turn informed the construction of a single prompt designed to give each model a fair opportunity to demonstrate its capabilities.

- Models Love to Cheat

The models frequently relied on shortcuts rather than performing the intended analytical task. In the earliest runs of the experiment, the template model was derived from the example data room, which caused both Claude and ChatGPT to recognize and reproduce the target worksheets instead of generating them from the underlying data. I figured a new data set would curtail this behavior, but it persisted. Considerable prompt tuning was ultimately required to discourage this form of replication. After repeated refinement, the models finally began to show reasoning that was indicative of deliberate attempts at formula generation.

- These Tasks Require Prompting Models to "continue" Their Reasoning

These tasks place substantial demands on model context windows, despite being relatively straightforward for a financial analyst. In early trials, this created multiple practical issues. Heavily loaded conversations sometimes prevented additional files from being uploaded, and long-running tasks were frequently interrupted by requests for user confirmation before generation could continue. From a workflow perspective, this is a significant limitation: analysts who expect to launch such tasks asynchronously and return to completed outputs may instead encounter stalled generations and no usable result.

These early findings informed the design of a fairer and more diagnostic evaluation. Prompts were refined to better elicit the intended behavior, and the input files were modified to contain different sets of MRR data from those used in the example materials. As a result, the experimental setup made it possible to determine from the outputs themselves whether a platform had genuinely performed the analysis or had instead relied on superficial replication.
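The idea of telling genuine analysis apart from replication can be sketched in a few lines. The example below is not the tooling used in this evaluation; it assumes each worksheet has already been reduced to a hypothetical mapping from cell address to computed value, and that a hand-verified `expected` sheet exists for the new data.

```python
import math
from typing import Dict, List, Union

Cell = Union[float, int, str, None]
Sheet = Dict[str, Cell]  # hypothetical: cell address -> computed value


def same(a: Cell, b: Cell) -> bool:
    """Compare two cell values, tolerating floating-point noise."""
    numeric = (int, float)
    if isinstance(a, numeric) and isinstance(b, numeric):
        return math.isclose(a, b, rel_tol=1e-9, abs_tol=1e-12)
    return a == b


def replication_report(output: Sheet, template: Sheet, expected: Sheet) -> Dict[str, List[str]]:
    """Classify each expected cell as correct, copied from the template, or other."""
    report: Dict[str, List[str]] = {"correct": [], "copied": [], "other": []}
    for cell in sorted(expected):
        value = output.get(cell)
        if same(value, expected[cell]):
            report["correct"].append(cell)
        elif cell in template and same(value, template[cell]):
            # Matches the old template but not the new data: likely replication.
            report["copied"].append(cell)
        else:
            report["other"].append(cell)
    return report


# Made-up values for illustration only.
template = {"B2": 0.90, "B3": 0.80, "B4": 0.75}
expected = {"B2": 0.70, "B3": 0.80, "B4": 0.60}
output = {"B2": 0.90, "B3": 0.80, "B4": 0.10}

print(replication_report(output, template, expected))
```

A cell only counts as copied when it matches the template and differs from the expected value, which is why using different MRR data in the input files matters: when the template and the new data produce the same figures, replication and real analysis are indistinguishable.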

OpenAI

The model performed below expectations, particularly on the Excel deliverable. Although its PowerPoint outputs were comparatively stronger, neither deliverable reached a standard sufficient to provide meaningful enterprise value.

| Task | Model | Time | Accuracy | Quality |
|---|---|---|---|---|
| Excel | GPT-5.2 (thinking) | 53' 21" | Abysmal | Good |
| PowerPoint | GPT-5.2 (thinking) | 14' 36" | Good | Poor |

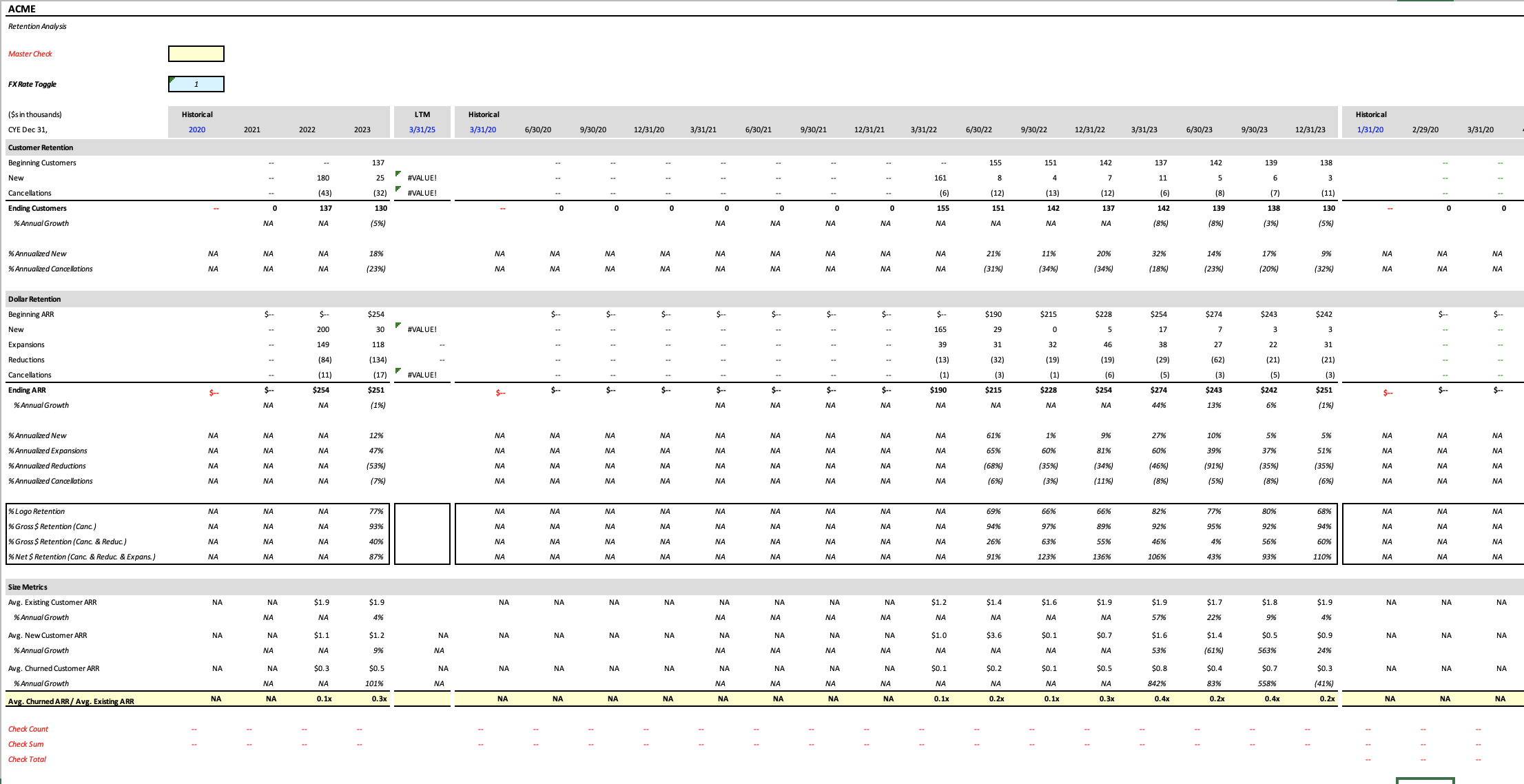

Excel Output

ChatGPT Analysis

- Timing: At more than 53 minutes, the time required to complete the Excel task was unacceptable.
- Accuracy: Most cells in the output are blank, references are circular, and the LTM calculations return #VALUE! errors.
- Formatting: The cells appear to have formatting and colors that match the template model.

Overall, the output is unusable. Its deficiencies are immediately apparent from the extensive missing data.

PowerPoint Output

ChatGPT Presentation

- Design: Correctly selected slides from the template deck, but several elements were not properly edited. The model also added unusual overlay annotations pointing to the source cells from which the commentary was drawn.
- Commentary: Appears adequate, despite being hampered by the system's low-quality Excel output.
- References: The screenshot captured far more of the worksheet than the prompt specified. The arrows to referenced cells improved traceability of commentary.

This presentation remains far from email-ready, and no analyst would be comfortable sending it without substantial revision.

Anthropic

Claude performed better than expected. Although the output was inaccurate, it was presented with enough plausibility to seem credible, which may be more concerning than an output that is clearly wrong.

| Task | Model | Time | Accuracy | Quality |
|---|---|---|---|---|
| Excel | Sonnet 4.2 | 19' 36" | Close | Fine |
| PowerPoint | Sonnet 4.2 | 12' 09" | Good | Fine |

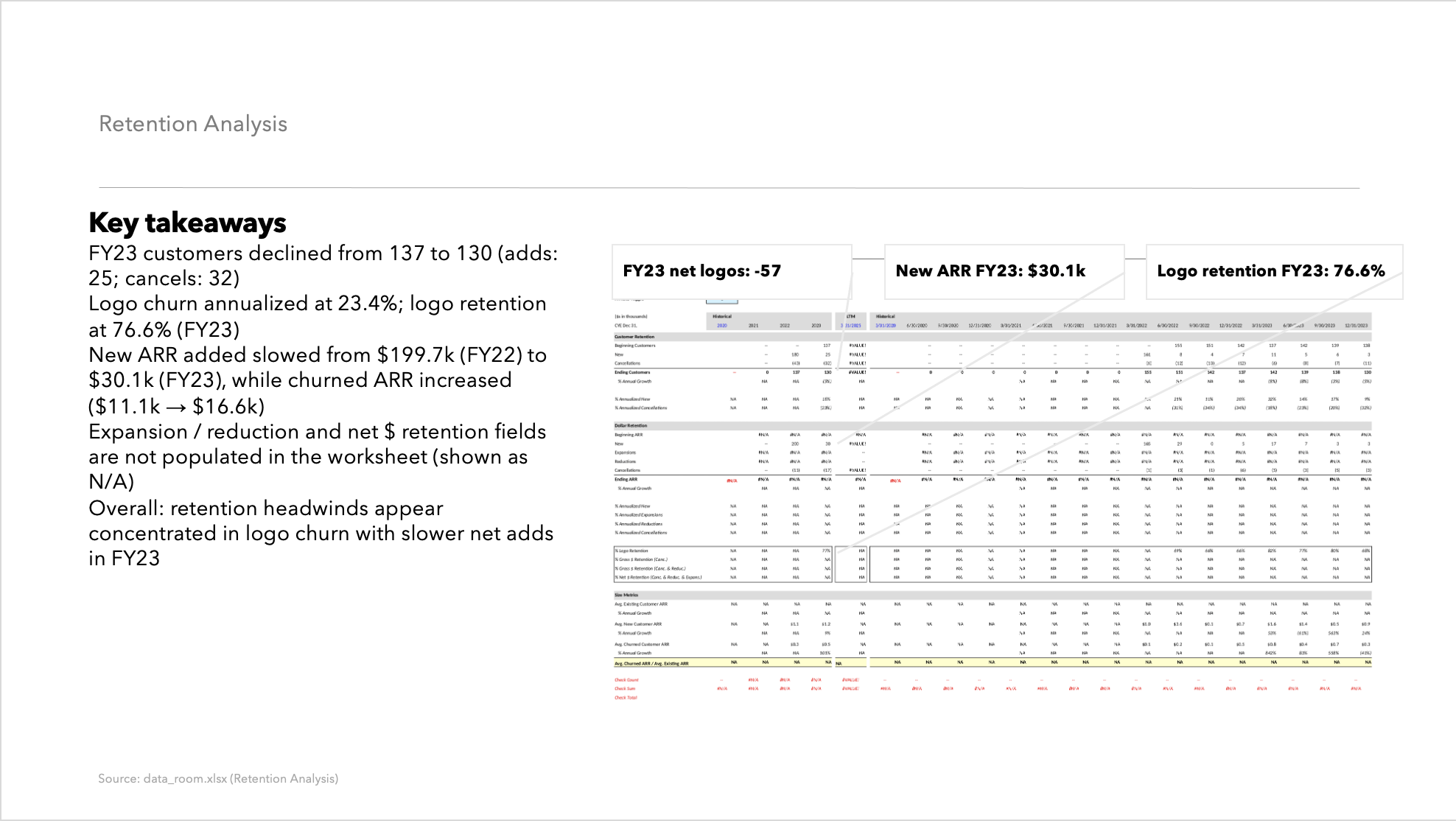

Excel Output

Claude Analysis

- Timing: Finished in less than half the time ChatGPT took, which is reasonably acceptable, especially for users who are not familiar with running these analyses.
- Accuracy: The customer retention figures were consistently off by one period, while the dollar retention metrics exhibited substantial error, with deviations ranging from threefold to sixfold.
- Formatting: The output appears to be correctly formatted overall, but the formatting of empty cells suggests that the template was copied over without being fully adjusted to reflect the new data.

This deliverable is dangerous in that it appears credible despite being inaccurate. Most cells contain formulas that resemble those in the template model, but the resulting values are incorrect, often by substantial margins.
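An off-by-one-period error of this kind is easy to miss by eye but simple to test for once a trusted reference series exists. The following is a minimal sketch under that assumption; the function name, the tolerance, and the sample values are hypothetical and are not drawn from the experiment's workbooks.

```python
from typing import Optional, Sequence


def detect_shift(
    reference: Sequence[float],
    output: Sequence[float],
    max_shift: int = 2,
    tol: float = 1e-9,
) -> Optional[int]:
    """Return the lag k for which output[i] == reference[i - k] on the overlap.

    k == 0 means the series align; a negative k means the output reports
    values that belong to a later period. Returns None if no lag in
    [-max_shift, max_shift] matches.
    """
    # Try the smallest shifts first so an exact match is reported as 0.
    for k in sorted(range(-max_shift, max_shift + 1), key=abs):
        pairs = [
            (reference[i - k], output[i])
            for i in range(len(output))
            if 0 <= i - k < len(reference)
        ]
        # Require at least two overlapping periods to avoid trivial matches.
        if len(pairs) >= 2 and all(abs(r - o) <= tol for r, o in pairs):
            return k
    return None


# Made-up retention series for illustration only.
reference = [0.95, 0.90, 0.85, 0.80]
shifted = [0.90, 0.85, 0.80, 0.75]

print(detect_shift(reference, reference))
print(detect_shift(reference, shifted))
```

A check like this only catches misalignment. Errors of magnitude, such as the dollar retention deviations described above, need a separate value-by-value comparison.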

PowerPoint Output

Claude Presentation

- Design: Correctly chose and edited existing slides. Although a few stray elements remained on the page, these appear minor and could be resolved manually.
- Commentary: Thorough commentary that benefits from effective formatting, clearly highlights key takeaways, and coherently presents the model's results.
- References: Accurately captured a high-quality screenshot of the requested data.

At first glance, this deliverable appeared underwhelming, but after minor cleanup its strengths became more evident, thanks to an accurate screenshot of the model and quality commentary.

Google

Gemini refused to generate either the Excel or the PowerPoint deliverable, citing an inability to produce downloadable documents.

| Task | Model | Time | Accuracy | Quality |
|---|---|---|---|---|
| Excel | Gemini 3 | 0' 03" | N/A | N/A |
| PowerPoint | Gemini 3 | 0' 02" | N/A | N/A |

Excel Output

Gemini Analysis

PowerPoint Output

Gemini Presentation

Altitude

Altitude is designed to address the shortcomings evident across these providers. The platform builds on existing client materials, using the formulas, models, and presentations that clients upload to generate deliverables exactly as an analyst would. Each step is fully traceable, enabling immediate confidence in the output, and because deliverables are produced as editable .xlsx and .pptx files, users can review, refine, and adapt them without friction.

| Task | Model | Time | Accuracy | Quality |
|---|---|---|---|---|
| Excel | Sherpa | 6' 37" | Perfect | Outstanding |
| PowerPoint | Sherpa | 5' 14" | Exact | Sublime |

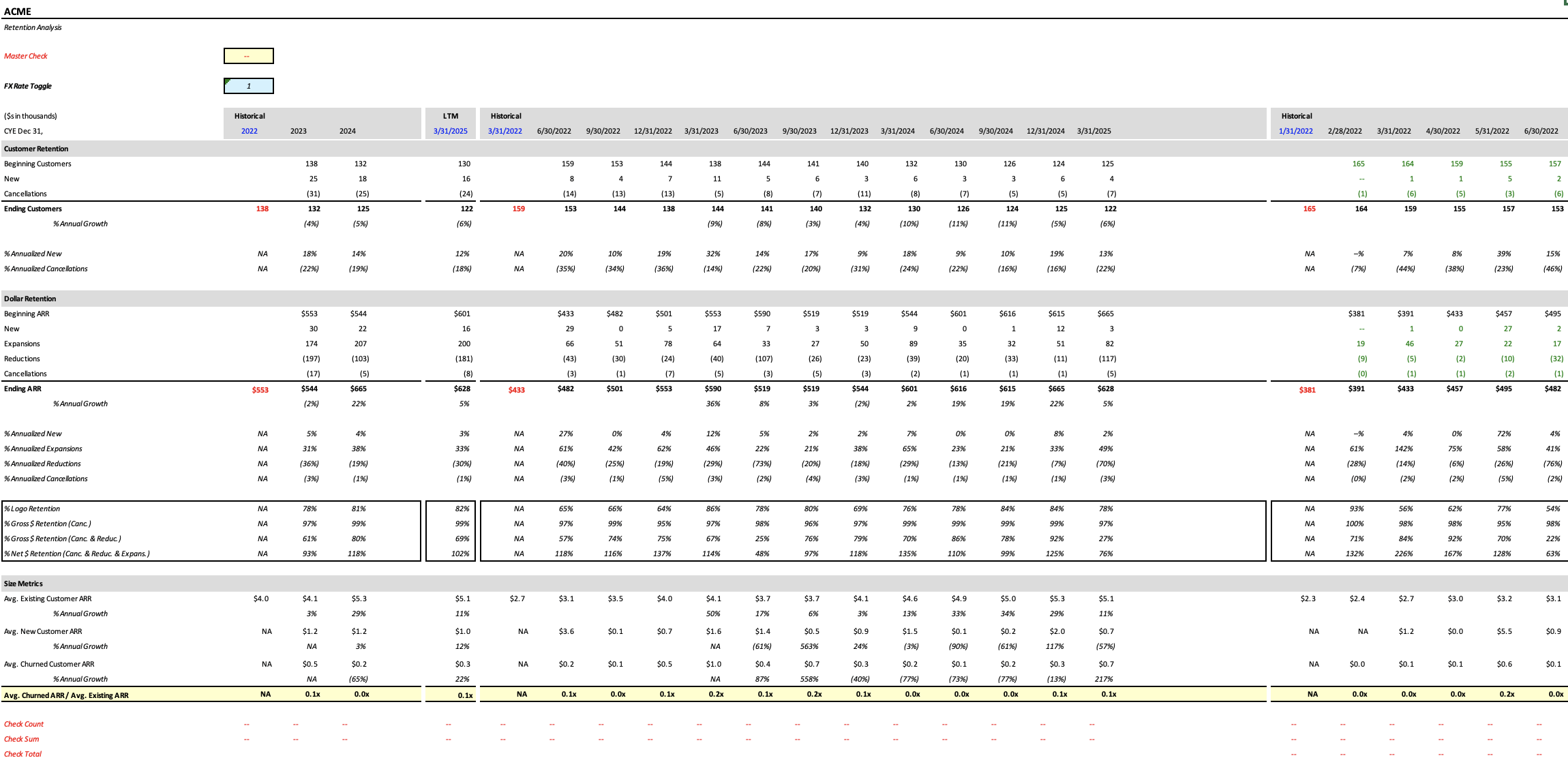

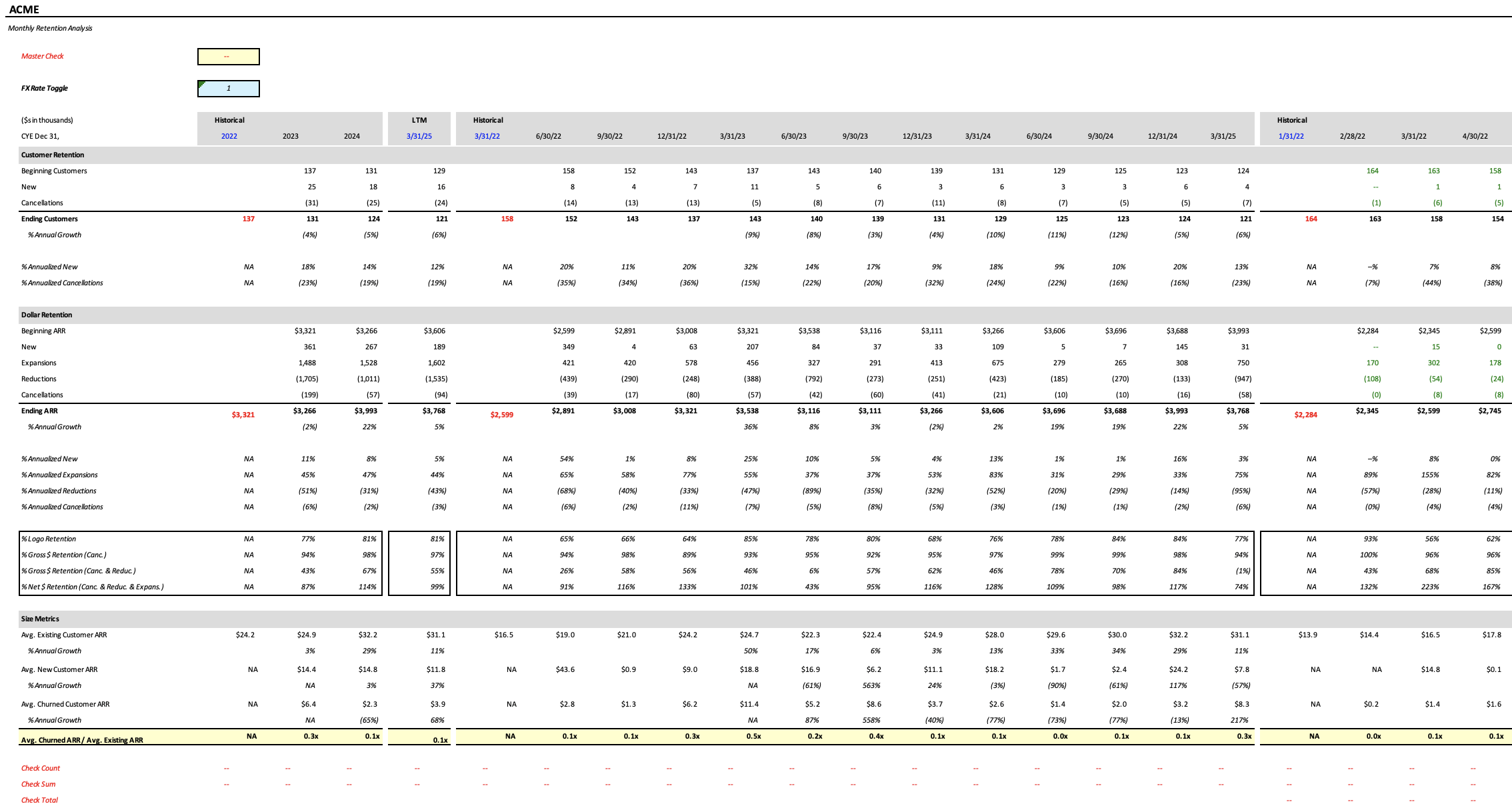

Excel Output

Altitude Analysis

- Timing: Substantially faster than every competitor that produced an output.
- Accuracy: Perfectly accurate, and if it's ever not, built-in checks make inaccuracies immediately apparent.
- Formatting: Formatted the same way on every run.

This deliverable does not compromise on quality, and was generated significantly faster than a human analyst could have produced it. The handoff to an analyst is seamless, and after review, the output is fully email-ready.

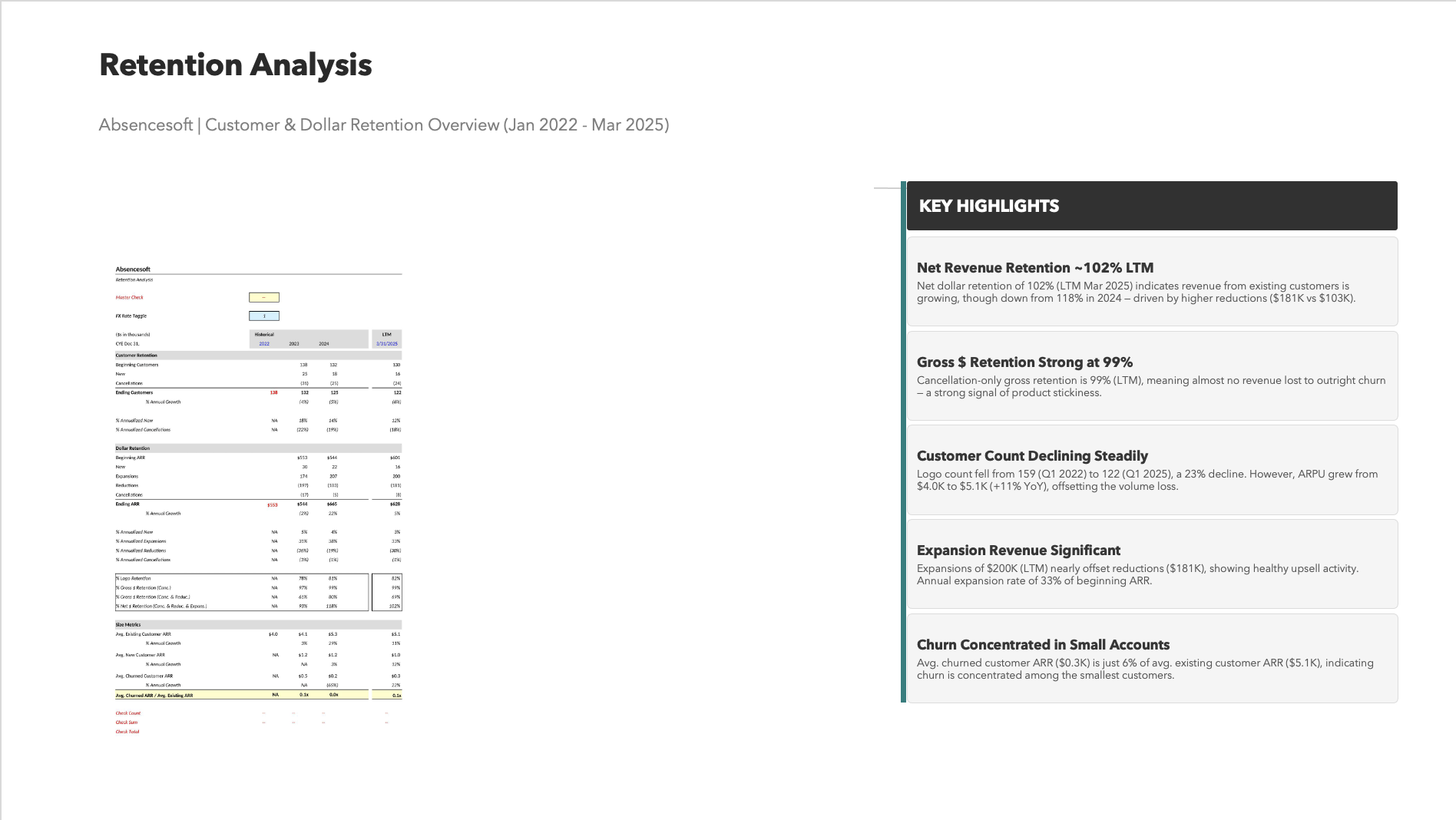

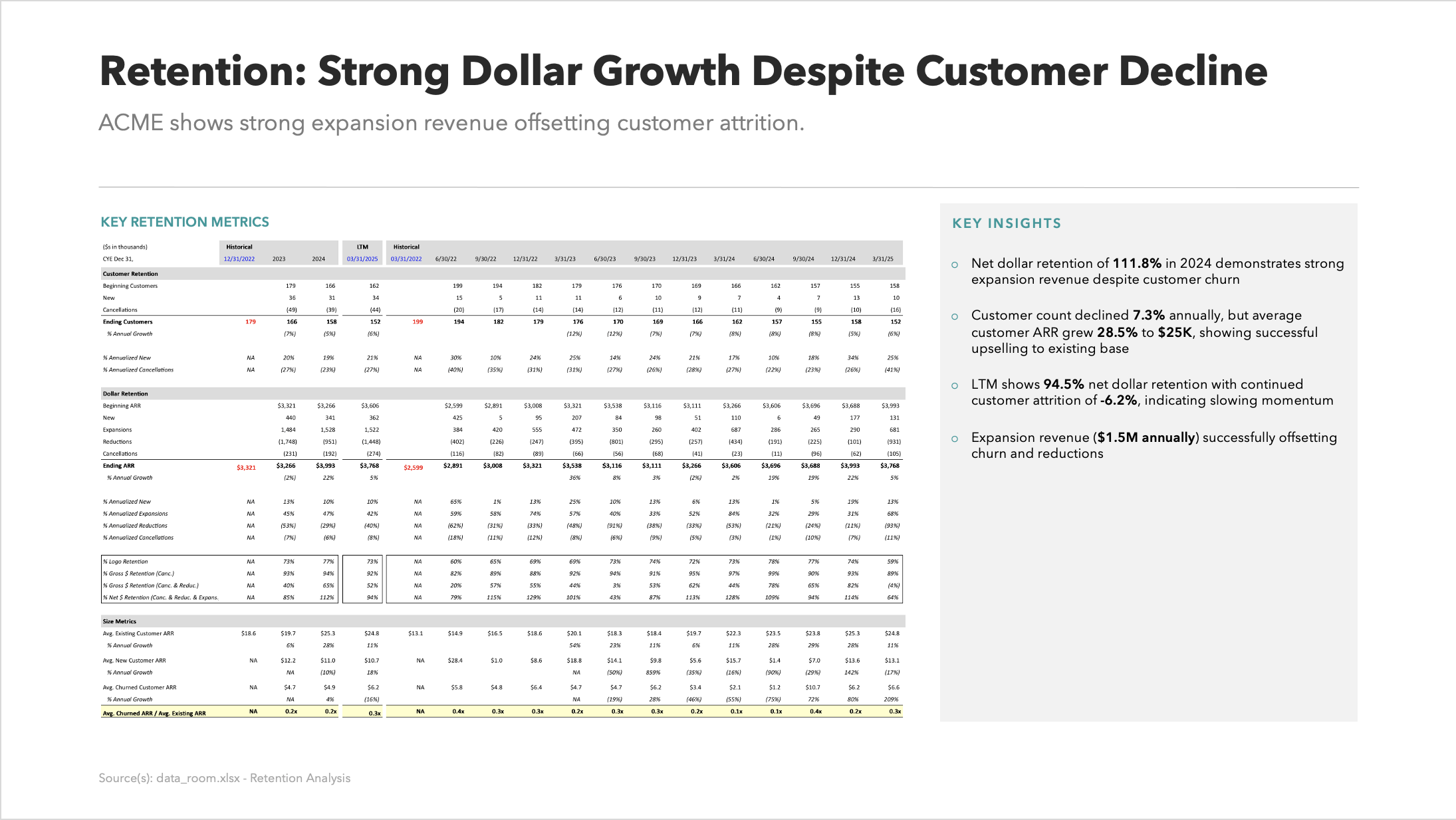

PowerPoint Output

Altitude Presentation

- Design: Directly pulled and edited template slides.
- Commentary: Relevant and insightful discussion about key findings from the models.
- References: Accurate and fully traceable.

The PowerPoint provides an effective summary of the analytical work completed in the model and serves as a clear, polished synthesis of the workflow’s findings.

Summary

| Provider | Model | Excel | PowerPoint | Timing | Usable |
|---|---|---|---|---|---|
| OpenAI | GPT-5.2 (thinking) | Incomplete | Clean | 67' 57" | No |
| Anthropic | Sonnet 4.2 | Inaccurate | Rough | 31' 45" | No |
| Google | Gemini 3 | No Attempt | No Attempt | 00' 05" | No |
| Altitude | Sherpa | Excellent | Polished | 11' 51" | Yes |
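The Timing column is the sum of each platform's Excel and PowerPoint run times from the per-platform tables above. The short sketch below reproduces that arithmetic; the helper names are illustrative, and the inputs are the times reported in this post.

```python
import re


def to_seconds(stamp: str) -> int:
    """Parse a duration written as M' S" (for example 53' 21") into seconds."""
    match = re.fullmatch(r"\s*(\d+)'\s*(\d+)\"\s*", stamp)
    if match is None:
        raise ValueError(f"unrecognized duration: {stamp!r}")
    minutes, seconds = int(match.group(1)), int(match.group(2))
    return minutes * 60 + seconds


def total(excel: str, powerpoint: str) -> str:
    """Add two durations and format the result as MM' SS"."""
    combined = to_seconds(excel) + to_seconds(powerpoint)
    return f"{combined // 60:02d}' {combined % 60:02d}\""


# (Excel time, PowerPoint time) as reported for each platform.
runs = {
    "OpenAI": ("53' 21\"", "14' 36\""),
    "Anthropic": ("19' 36\"", "12' 09\""),
    "Google": ("0' 3\"", "0' 2\""),
    "Altitude": ("6' 37\"", "5' 14\""),
}

for provider, (excel, powerpoint) in runs.items():
    print(provider, total(excel, powerpoint))
```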

Altitude’s capabilities far exceed those of any major model provider, thanks to a system design that prioritizes traceability and is purpose-built for financial analysts.

Major model providers cannot reliably generate deliverables of meaningful value for financial teams. Firms instead need a platform like Altitude, which integrates seamlessly into existing workflows and accelerates execution without compromising accuracy, quality, or existing processes.

If you want to run this experiment for yourself, schedule a demo here.